6 now with my comment. It’s 200% more lively here!

borari

Cybersecurity professional with an interest in networking, beginning to delve into binary exploitation and reverse engineering.

- 2 Posts

- 9 Comments

6·1 year ago

I'm not 100% sure on the answer to that.

> Twitter relies on Google Cloud to host services…

So I'm assuming that means Twitter is either using GCP to host internally developed cloud-based services or using SaaS deployments in the cloud, but that's just a complete guess on my part.

> …n Musk's takeover. Since "at least" March, Twitter has been pushing to renegotiate the contract

Edit: this section was in the next paragraph, lol.

> Now, Platformer has reported that a Twitter service called Smyte—an automated anti-abuse and anti-harassment tool that was previously operating on Google Cloud Platform (GCP)—will potentially shut down on June 30. This could lead to a flood of spam bots and CSAM on Twitter as bots and content could fail to be removed.

So it sounds like it’s an internally built Twitter service that they host in GCP.

4·1 year ago

Scroll to the bottom of your page and click the Instances link. For you, it should be

https://beehaw.org/instances.

9·1 year ago

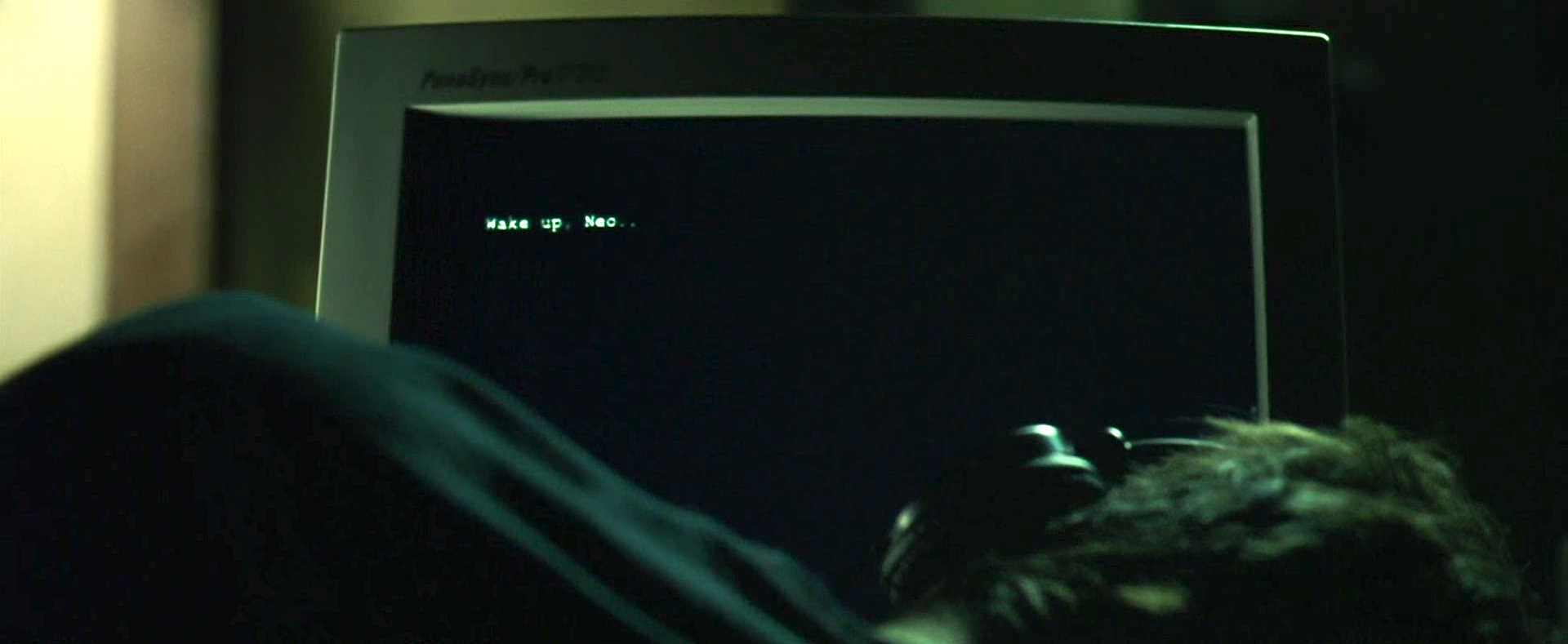

9·1 year agoWell, this isn’t exactly how I saw the Singularity Church of the MachineGod getting started, I’m still here for it.

1·1 year ago

So I just noticed this behavior while sitting at the top of the All/New feed on my instance. The surge of "new" posts that came in was all pretty old stuff, all from one or two communities on another instance. I'm now wondering if there's some sort of race condition in the interaction between the database and the front end, where these old posts are considered "new" when they're first pulled into the local instance's db.
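To make the symptom concrete, here is a minimal sketch of one way old posts could surface as "new," under a hypothetical schema. If a New feed ordered posts by when the local database stored them (`received_at`, an invented field) rather than by their original `published` time, a batch of old posts pulled in from a remote community would land at the top. This illustrates the symptom only. It is not Lemmy's actual schema or sort logic, and it is a simple ordering mismatch rather than a true race condition. All posts and dates below are made up.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Post:
    title: str
    published: datetime    # when the post was created on its home instance
    received_at: datetime  # hypothetical: when this instance stored its local copy


def new_feed(posts, key):
    """Return post titles newest-first by the chosen timestamp (stable for ties)."""
    return [p.title for p in sorted(posts, key=key, reverse=True)]


# Made-up example data: one genuinely new local post, plus two old remote
# posts that were fetched in a single batch a few minutes later.
posts = [
    Post("local post", datetime(2023, 6, 20, 12, 0), datetime(2023, 6, 20, 12, 0)),
    Post("old remote post A", datetime(2023, 5, 1, 9, 0), datetime(2023, 6, 20, 12, 5)),
    Post("old remote post B", datetime(2023, 5, 2, 9, 0), datetime(2023, 6, 20, 12, 5)),
]

# Sorting by local ingestion time pushes the old backfilled posts to the top.
by_received = new_feed(posts, key=lambda p: p.received_at)
# Sorting by original publish time keeps them where they belong.
by_published = new_feed(posts, key=lambda p: p.published)

print(by_received)   # ['old remote post A', 'old remote post B', 'local post']
print(by_published)  # ['local post', 'old remote post B', 'old remote post A']
```

If a feed behaved like `by_received`, checking the published dates of the "new" posts against when they appeared locally would be a quick way to tell this apart from a genuine timing bug.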

3·1 year ago

> The tool cannot be liable itself, obviously, but the creators of the tool and those who wield it absolutely can…

I absolutely agree with you here. The creators of the tool are responsible for its content. I’m a complete supporter of Section 230 in the US, but I absolutely do not think that sort of protection should apply to companies like OpenAI. Their tool created the content, their tool “published” the content, they are responsible for that content.

4·1 year ago

You can check the mod logs; they're public, and there's a link to them at the bottom of every page. I have certainly seen the lemmy.ml mods give out temp bans and permabans for rule-breaking behavior. I have even seen them hand out lengthy temp bans (4-5 days) to users whose posts seem much more closely aligned with the lemmy.ml admins' worldviews than those of the other party in the conversation that led to the ban. I think the accusations you're making here are pretty off base.

7·1 year ago

It's planned for tomorrow. No time was given in the announcement post.

I'm just going to add that the web UI on mobile is great, good enough that I've stopped using Mlem. Mlem doesn't show you which instances users and communities are coming from, which doesn't really matter for users but is super annoying for communities, and the main dev said that's intentional. It also shows you your "karma," which I'm assuming is just the raw up/downvotes your posts and comments have accrued, added up. Seeing that is what ultimately made me bounce; it seems like the complete antithesis of what Lemmy is trying to be about.

Also, while they're working on adding an NSFW blur, it doesn't exist yet, and fuck seeing all that loli AI porn in my feed. I don't mind having to scroll past stuff I'm not interested in, but come on, at least blur it.

Finally, the web UI has the rainbow indent lines, while Mlem has nothing but whitespace to indicate child comments. I'm sure they'll fix most of this given time, but I'm not using it until they dump the cumulative karma tracking.