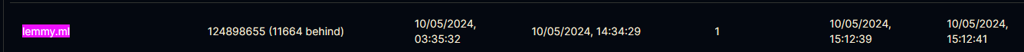

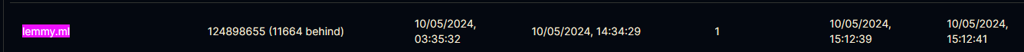

Hmm, lemmy.ml is lagging a bit behind lemmy.world, but not by enough to explain a 2-month delay

The model is just hallucinating in this case.

All translations are LLM translations by this point, I believe.

Yes, just grab any recent LLM like Mistral-7B and ask it to translate for you. There's a local client at https://github.com/LostRuins/koboldcpp, but you might need a good GPU to get quick answers.

Alternatively use https://lite.koboldai.net to use someone else’s computer.
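For the local koboldcpp route, a rough sketch of what "ask it to translate" could look like from a script. This assumes koboldcpp is already running locally with a model loaded and is serving its KoboldAI-compatible endpoint at `http://localhost:5001/api/v1/generate`. The prompt wording, the helper names and the sampling values are all made up for illustration, so adjust them to whatever your model expects.

```python
import json
import urllib.request

# Assumption: koboldcpp is running locally on port 5001.
KOBOLD_URL = "http://localhost:5001/api/v1/generate"


def build_translation_payload(text, target_language="English", max_length=200):
    """Build the JSON body for a generate request (hypothetical prompt format)."""
    prompt = (
        f"Translate the following text to {target_language}.\n\n"
        f"Text: {text}\n\n"
        "Translation:"
    )
    # Low temperature keeps the model from getting creative with the translation.
    return {"prompt": prompt, "max_length": max_length, "temperature": 0.2}


def translate(text, target_language="English", url=KOBOLD_URL):
    """Send the prompt to the local server and return the generated text."""
    body = json.dumps(build_translation_payload(text, target_language)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        data = json.load(response)
    return data["results"][0]["text"].strip()
```

Calling `translate("Guten Morgen")` only works while the server is up. As with any LLM output, the result can be hallucinated, so don't trust it blindly.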

Yes, you can use the Lemmy API. There are also SDKs for Python and JavaScript.
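If you'd rather skip the SDKs, here is a minimal sketch of hitting the HTTP API directly with just the standard library. It assumes the instance exposes the v3 API under `/api/v3/` and that the post listing endpoint accepts `sort`, `limit` and `community_name` query parameters. The function names and the User-Agent string are made up for the example.

```python
import json
import urllib.parse
import urllib.request


def build_post_list_url(instance, community=None, sort="New", limit=10):
    """Build the URL for listing posts on a Lemmy instance (assumes API v3)."""
    params = {"sort": sort, "limit": limit}
    if community:
        params["community_name"] = community
    return f"https://{instance}/api/v3/post/list?" + urllib.parse.urlencode(params)


def fetch_post_titles(instance, community=None, sort="New", limit=10):
    """Fetch recent posts and return only their titles."""
    url = build_post_list_url(instance, community, sort, limit)
    # Illustrative User-Agent; pick something that identifies your own script.
    request = urllib.request.Request(url, headers={"User-Agent": "lemmy-api-sketch/0.1"})
    with urllib.request.urlopen(request, timeout=30) as response:
        data = json.load(response)
    # Assumption: each entry in "posts" wraps the post itself under a "post" key.
    return [item["post"]["name"] for item in data.get("posts", [])]
```

Something like `fetch_post_titles("lemmy.world", community="technology", limit=5)` should give you a list of titles without needing to log in. Anything that writes (posting, voting) needs an auth token, which this sketch leaves out.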

Goddamn, lazier than an AI bot?

We already have a summarizer bot around. Why you trying to put it out of business like that?

Looks like there’s plenty of ground and from what I read about vaxry, they seem to be a bigot at best and a cryptofash at worst.

This is the right answer

I disabled the bot. The state of the software is just too unstable atm and causes unexpected bugs like these.

It appears that the original developer was told to wait for weeks

My bot has a readme with instructions and we’re working on a docker image too: https://github.com/db0/threativore

Some instances (like mine and lemmings.world) have automated bots to clean up these spam posts everywhere. I think lemmy.world has an automod as well, but maybe it's not working as well if you keep seeing these spam posts?

It's practically been all my free time in the past 14 years

If you want to be able to use your models from everywhere securely, then koboldcpp on the AI Horde is your best option. Super easy to set up.

Someone’s about to discover the Streisand Effect

Yes, but those changes are not unique to the ansible 1.3.0 deployment

The lemmy frontend will come up fast, but you would see an error and a popup telling you that lemmy is still starting. If you can see content, then the DB migration is finished already. The 1.3.0 branch of ansible doesn't have any changes which would cause a performance impact. Currently it just adjusts the readme and adds pictrs 0.4.7.

What if that train is regularly running under capacity, or you are just standing?

I’m never joining another VC-backed social network, whatever they promise.