Ok. Did a bunch more testing here tonight.

I have 2x 8TB SAS drives that have worked for several weeks now. Those show up in BIOS reliably regardless of which connector I use. So I think the HBA is working and the cables are good.

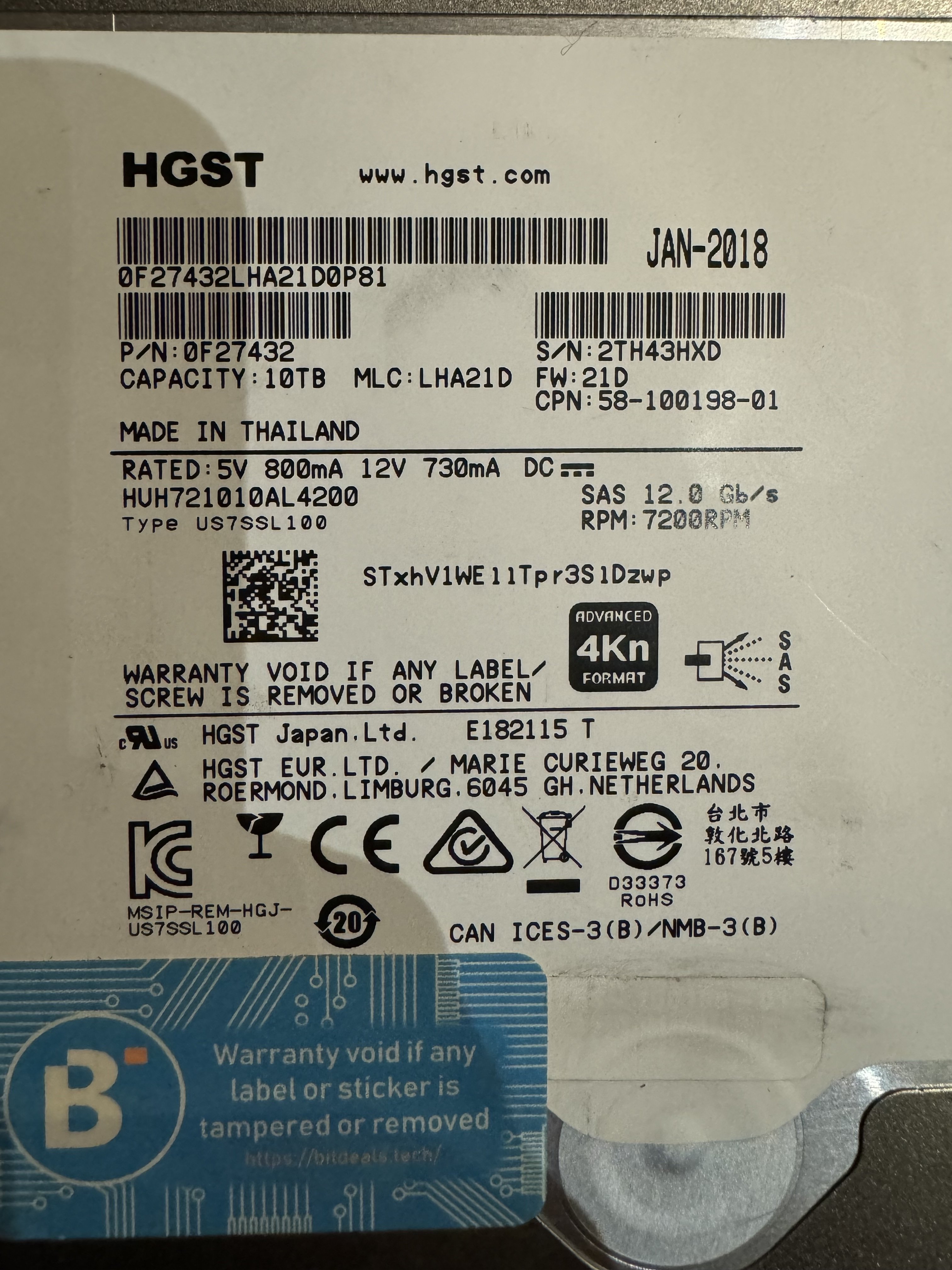

Of the 10x 10TB drives, 1 drive shows up reliably. It also seems to work on any connector I use. But it is the only drive that works.

Here are 8 of the 10 installed, with only one showing in BIOS. I think the other 7 are not even spinning up.

An additional weird thing is that at least 1 other of the 10 drives did show up the first time I plugged it in, from inside the xpenology VM. I did ‘hot swap’ that one in, and it then passed a SMART test. But since it passed the test and I pulled it, it hasn’t worked again. I’m also not positive which drive it is, because originally I wasn’t expecting things to behave so weirdly, so I didn’t start taking notes…

As another test, I got a hold of a 9305-16i to see if the drives would read on that. And while that card would show up in BIOS, none of the drives – including the 8TB ones that have always worked – showed up or spun up at all! I wonder if that card is not compatible with my mobo?

Is it possible other BIOS settings are interfering?

I’m not a lawyer, but if I wasn’t planning to do anything related to the other ‘dedsec’, I wouldn’t even consider what the owner of that dedsec would think.

Businesses have the same name ALL THE TIME.

Unless you’re trying to piggyback on or undermine that other dedsec, I, the non-lawyer, can’t imagine how they’d have any standing to raise a concern.

Whatever you’re doing must have led you to the dedsec name (I assume it’s a website for dog-eat-dog security). There’s just no way in my (non-lawyer) mind that EA or Ubisoft has any right to stop you from saving dogs from being eaten.

I (the non-lawyer) would 100% not pay a lawyer for their opinion on this.