Gimp might be able to perform that little logo-transformation favour for you libre of charge, but at least give it a call after for heaven’s sake.

- 4 Posts

- 72 Comments

5·2 months ago

Storage box is self-serviced storage on a single server, as far as I’m aware. If you need replication, you need to rent storage at a second location and do it yourself.

2·2 months ago

I have a Raspberry Pi 3 with a Hifiberry DAC running OSMC (nicely packaged Kodi on top of Debian) acting as my media center. I recently installed Jellycon, hoping to use server-side transcoding for a few formats my old TV doesn’t support.

My verdict: Menu navigation is slow, but it’s a native Kodi integration (supports widgets) and playback works great once you have made your way through the menus. You can selectively set transcoding options per file type, which is exactly what I needed.

Best solution I’ve seen so far, as it also does IR remote passthrough over HDMI if your TV supports it. The addon works in any Kodi setup, of course. I think there might be a way to start playback from the Jellyfin web UI, but I haven’t bothered with it. That would fully remedy the menu slowness, I think.

1·3 months ago

The answer seems to always be “not segmented enough”. ;)

1·3 months ago

Haha, why do I even ask.

1·3 months ago

This is a good hint, I’m going to take a look at that. Thank you!

2·3 months ago

I never specified, I think, and probably wasn’t too clear on it myself. Thanks for your insights, I’ll try to apply them to my configuration now.

3·3 months ago

This is exactly the type of answer I was looking for. Thanks a bunch.

So, in that sense, having one proxy on the LAN that knows about internal services, and another proxy that is exposed publicly but is only aware of public services, does help by reducing firewall rule complexity. Would you say that statement is correct?

2·3 months ago

Right, I agree that a proxy exploit means I’m compromised either way. Thanks for your reply.

I am trying to prevent the case where internal services that I don’t otherwise have a need to lock down very thoroughly might get publicly exposed. I take it it’s an odd question?

Re “bouncer”: What I think I meant by it is exposing some services publicly and not others, discriminated by host name, with public DNS (service1.example.com) or internal DNS (service2.home.example.com). Hence my question about one proxy for internal and one for public, or one that does both.
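To make the "one proxy that does both" idea a bit more concrete, here is a minimal sketch of the decision such a proxy would have to make per request. It is not taken from any particular proxy; the host names are the examples from above, and the LAN range and the `allow` helper are assumptions made up for illustration.

```python
import ipaddress

# Example host names from the comment above; replace with your own.
PUBLIC_HOSTS = {"service1.example.com"}
INTERNAL_HOSTS = {"service2.home.example.com"}

# Assumed LAN range (hypothetical); adjust to your own network.
LAN = ipaddress.ip_network("192.168.0.0/16")


def allow(host: str, client_ip: str) -> bool:
    """Return True if a request for `host` from `client_ip` should be proxied."""
    host = host.lower().rstrip(".")
    if host in PUBLIC_HOSTS:
        # Public services are reachable from anywhere.
        return True
    if host in INTERNAL_HOSTS:
        # Internal services are only reachable from the LAN.
        return ipaddress.ip_address(client_ip) in LAN
    # Unknown hosts are rejected by default.
    return False
```

The default-deny at the end is the part that matters for my worry: a service that was never listed as public cannot end up exposed just because its name happens to resolve.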

3·3 months ago

Right, I could have been more precise. I’m talking about security risk, not resilience or uptime.

“It’ll probably be the most secure component in your stack.” That is a fair point.

So, one port-forward to the proxy, and the proxy reaching into both VLANs as required, is what you’re saying. Thanks for the help!

2·3 months ago

The services run on a separate box; I have yet to decide which VLAN to put it on. I was not planning to have it in the DMZ but to create ingress firewall rules from the DMZ.

1·3 months ago

One proxy with two NICs downstream? Does that solve the “single point of failure” risk or am I being overly cautious?

Plus, the internal and external services are running on the same box. Is that where my real problem lies?

14·3 months ago

selfh.st is an independent publication created and curated by Ethan Sholly. […] selfh.st draws inspiration from a number of sources including reddit’s r/selfhosted subreddit, the Awesome-Selfhosted project on GitHub, and the #selfhosted/#homelab communities on Mastodon.

and also

This Week in Self-Hosted is sponsored by Tailscale, trusted by homelab hobbyists and 4,000+ companies. Check out how businesses use Tailscale to manage remote access to k8s and more.

This list is under the Creative Commons Attribution-ShareAlike 3.0 Unported License. Terms of the license are summarized here. The list of authors can be found in the AUTHORS file. Copyright © 2015-2024, the awesome-selfhosted community

Here’s the

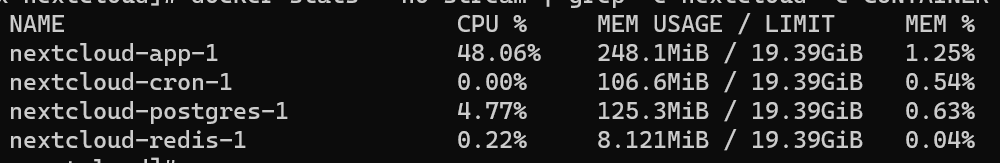

docker statsof my Nextcloud containers (5 users, ~200GB data and a bunch of apps installed):

No DB wiz by a long shot, but my guess is that most of that 125MB is actual data. Other Postgres containers for smaller apps run 30-40MB. Plus the container separation makes it so much easier to stick to a good backup strategy. Wouldn’t want to do it differently.

2·4 months ago

This is the setup I have (Nextcloud, Keepass Desktop, Keepass2android+webdav) and k2a handles file discrepancies very well. I always pick “merge” when it informs me of a conflict on save. I have been using it like that for years without a problem.

Edit: added benefit, I have the Keepass extension installed in my Nextcloud, so as long as I can gain access to it, I have access to my passwords, no devices needed.

31·4 months ago

Page loading times, general stability. Everything, really.

I set it up with sqlite initially to test if it was for me, and was surprised how flaky it felt given how highly people spoke about it. I’m really glad I tried with postgres instead of just tearing it down. But my experience is highly anecdotal, of course.

3·4 months ago

You can do batch operations in a document view. Select multiple documents and change the attributes in the top menu. Which commands are you missing?

281·4 months ago

Slow and unreliable with sqlite, but rock solid and amazing with postgres.

Today, every document I receive goes into my duplex ADF scanner to scan to a network share which is monitored by Paperless. Documents there are ingested and pre-tagged, waiting for me to review them in the inbox. Unlike other posters here, I find the tagging process extremely fast and easy. Granted, I didn’t have to bring in thousands of documents to begin with but started from a clean slate.

What’s more, development is incredibly fast-moving and really useful features are added all the time.

2·5 months ago

You know your stuff, man! It’s exactly as you say. 🙏

Gimp believes in you and loves you in a non clingy way.